Loom - Fibers, Continuations and Tail-Calls for the JVM

I am excited about Project Loom. The project focuses on easy-to-use, lightweight concurrency for the JavaVM. Today, the JavaVM gives the programmer a one-Java-thread-to-one-OS-thread model. While that is the current Oracle implementation, many JavaVM versions ago the threads provided to the programmer were actually green threads.

Project Loom goes down that road again, providing lightweight threads to the programmer. Those lightweight threads are mapped to OS threads in a “many-to-many” relationship.

The implementation of lightweight threads available in the current Project Loom build of OpenJDK is not complete yet, but you can already get a good taste of how things are shaping up.

In this article, we put those threads to work applying Origami image-processing filters to a set of roughly 2,000 images (mostly cats), and compare how long each implementation takes to process the whole set.

MORE WORK? ™

The work applied to each file boils down to three steps:

- load an image

- apply a filter to the image

- save the image

Assuming the filter object is loaded once and available globally to the program (to avoid extra loading time), the process function looks like the one below.

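```java
// imread/imwrite: OpenCV Imgcodecs static methods (static import assumed);
// filter, WRITE and DEBUG are static fields of the enclosing class
static String process(File image) {
    // Load the image into a Mat and apply the globally loaded filter
    Mat m = filter.apply(imread(image.getAbsolutePath()));
    String path = "target/" + image.getName();
    // Save the filtered image only when WRITE is enabled
    if (WRITE)
        imwrite(path, m);
    if (DEBUG)
        System.out.printf("Processed image ... [%s]\n", path);
    return path;
}
```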

Nothing really surprising: load the image into a Mat object, apply the filter, and save the filtered image to a new file. Alongside this function, we define two static helper classes:

- One that wraps the process function in a Runnable’s run method, so we can use it in a Thread.

```java
static class OrigamiTaskAsRunnable implements Runnable {
    private final File image;

    public OrigamiTaskAsRunnable(File image) {
        this.image = image;
    }

    // Runs the Origami processing; the returned path is ignored
    public void run() {
        process(image);
    }
}
```

- Another that extends RecursiveTask, so we can submit it to a thread pool such as a ForkJoinPool.

```java
static class OrigamiTaskAsRecursiveTask extends RecursiveTask<String> {
    private final File image;

    public OrigamiTaskAsRecursiveTask(File image) {
        this.image = image;
    }

    // Returns the path of the processed image as the task's result
    @Override
    public String compute() {
        return process(image);
    }
}
```
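To make the difference between the two helpers concrete, here is a minimal, self-contained sketch. The process method in it is a hypothetical stand-in that only builds the output path (no OpenCV), and the class names are made up for illustration. The Runnable wrapper discards the result, while the RecursiveTask wrapper hands it back through join().

```java
import java.io.File;
import java.util.concurrent.ForkJoinPool;
import java.util.concurrent.RecursiveTask;

class HelperTasksSketch {
    // Hypothetical stand-in for process(): no OpenCV, it only computes the output path
    static String process(File image) {
        return "target/" + image.getName();
    }

    // Runnable wrapper: run() returns nothing, so the path is lost
    static class TaskAsRunnable implements Runnable {
        private final File image;

        TaskAsRunnable(File image) { this.image = image; }

        @Override
        public void run() { process(image); }
    }

    // RecursiveTask wrapper: the path becomes the task's result
    static class TaskAsRecursiveTask extends RecursiveTask<String> {
        private final File image;

        TaskAsRecursiveTask(File image) { this.image = image; }

        @Override
        protected String compute() { return process(image); }
    }

    static String runBoth(File image) {
        // The Runnable can be handed to a plain Thread
        Thread t = new Thread(new TaskAsRunnable(image));
        t.start();
        try {
            t.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        // The RecursiveTask goes to a ForkJoinPool, and join() returns its result
        ForkJoinPool pool = new ForkJoinPool();
        try {
            return pool.submit(new TaskAsRecursiveTask(image)).join();
        } finally {
            pool.shutdown();
        }
    }

    public static void main(String[] args) {
        System.out.println(runBoth(new File("images/cat.jpg"))); // prints target/cat.jpg
    }
}
```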

Preparing the Editor and the Virtual Machine

You can of course use any editor you want; here I describe the two short steps needed to use a freshly downloaded IntelliJ.

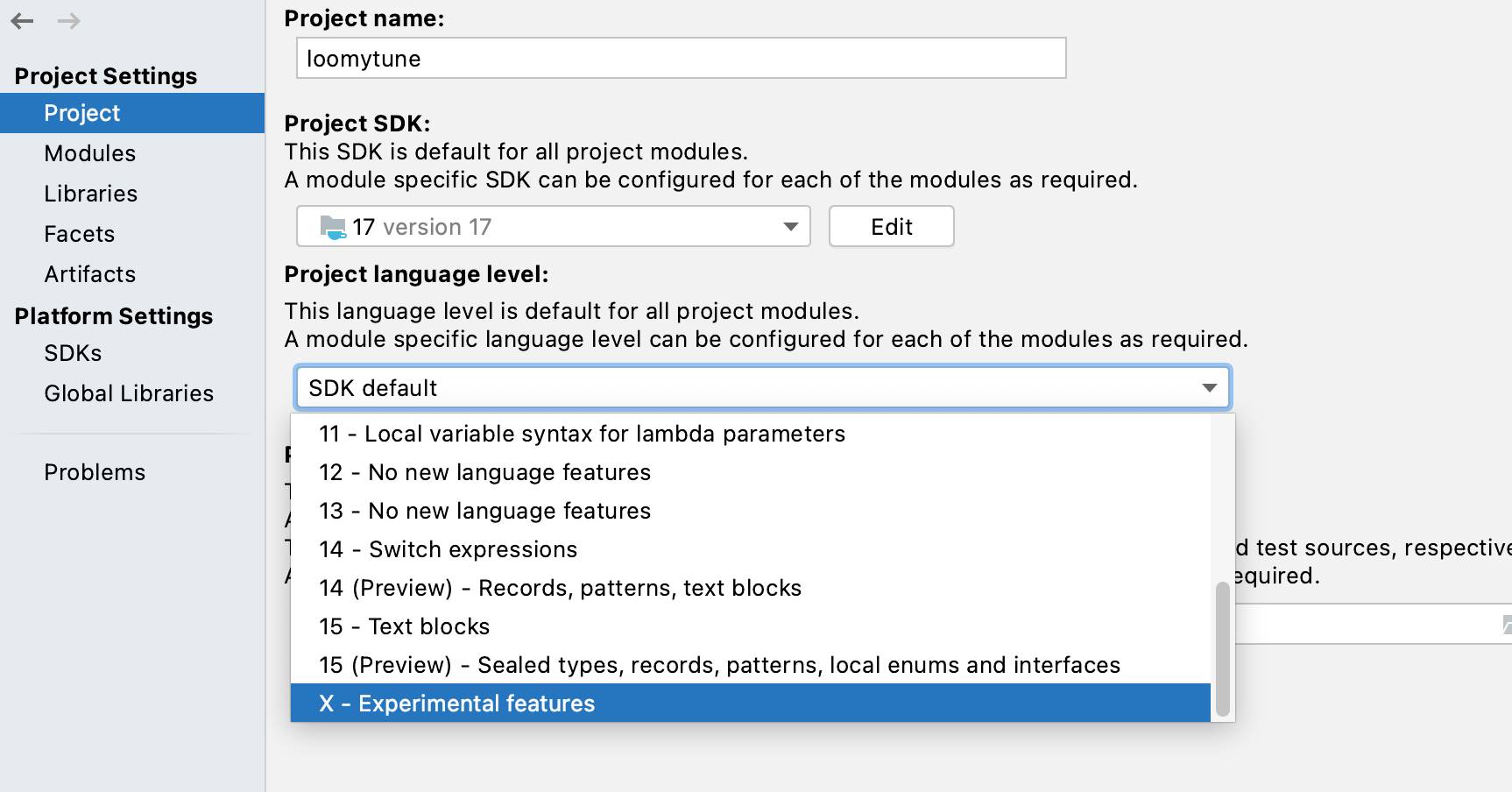

You need to manually enable experimental features in the project’s language level setting, as shown in the screenshot below.

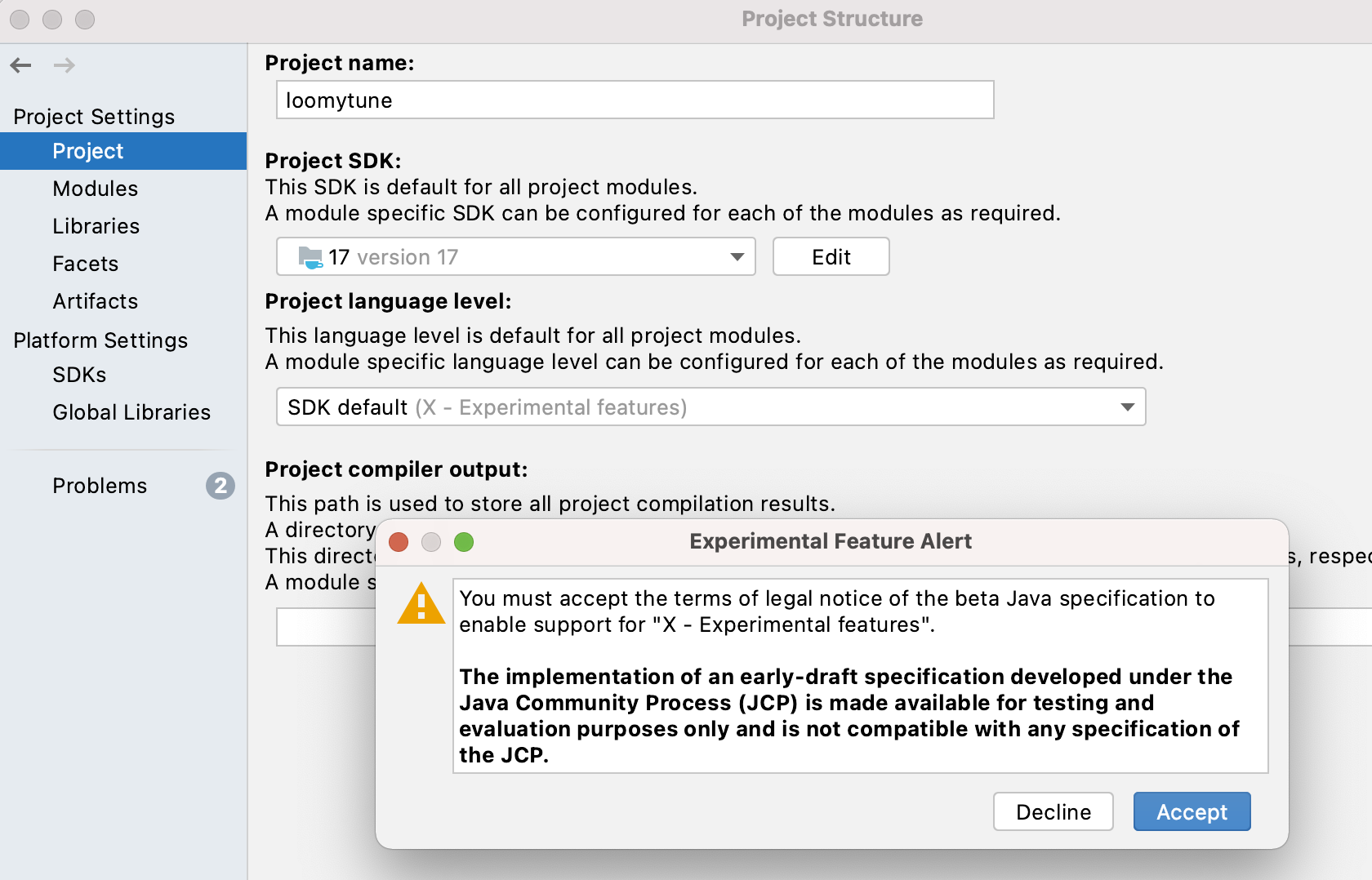

One extra step is to accept the Experimental Feature Alert notice/license, as shown in the picture below:

When using Maven, you also need to pass the --enable-preview argument to the compiler in your pom.xml file, as shown below:

```xml
...
<plugin>
    <groupId>org.apache.maven.plugins</groupId>
    <artifactId>maven-compiler-plugin</artifactId>
    <version>3.8.1</version>
    <configuration>
        <source>17</source>
        <target>17</target>
        <compilerArgs>
            <arg>--enable-preview</arg>
        </compilerArgs>
    </configuration>
</plugin>
...
```

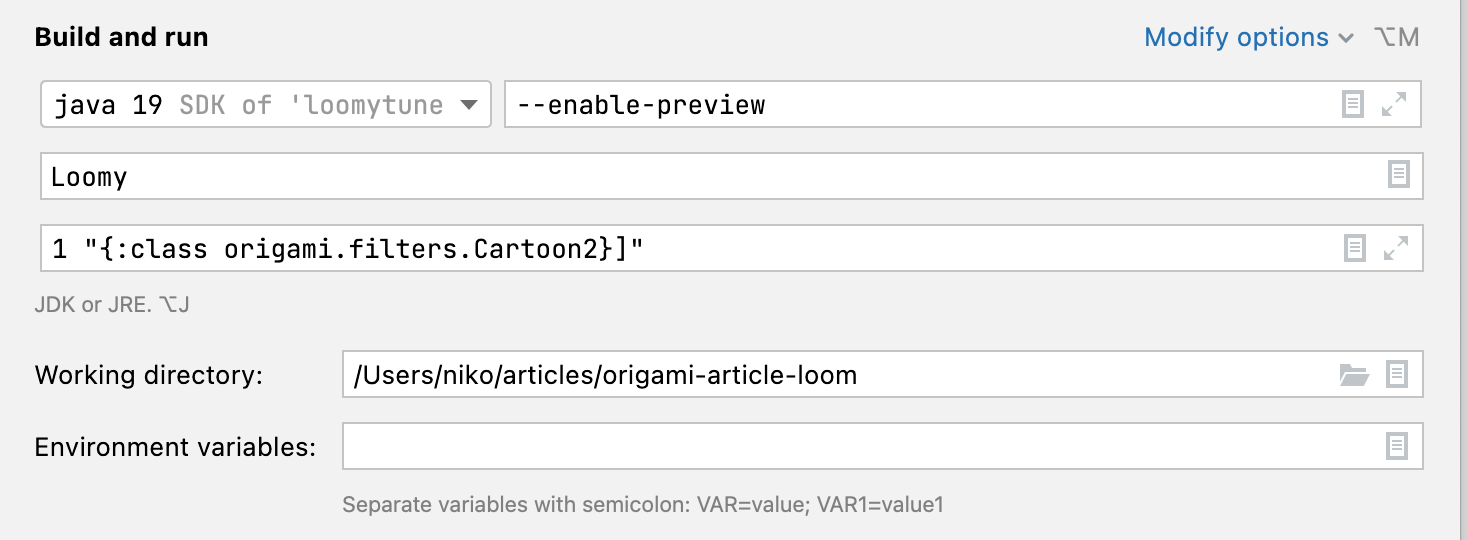

Finally, when running in IntelliJ, the following run configuration comes in handy:

That’s it for the configuration part; now let’s get down to the coding part.

I Ain’t Need No Thread: Gimme a Loop

Obviously, if we want to compare speed, a good baseline is to run without any threads, or in blunt words, with a good old for-loop. Either of:

```java
private static void loomyNoThreads(File[] images) {
    for (int i = 0; i < images.length; i++) {
        new OrigamiTaskAsRunnable(images[i]).run();
    }
}
```

or

```java
private static void loomyNoThreads(File[] images) {
    for (File image : images) {
        new OrigamiTaskAsRunnable(image).run();
    }
}
```

We could call the previously defined process function directly, but for the sake of clarity, and to keep the number of object instantiations comparable across the scenarios, we create the Runnable version of the Origami task.

For each of the following tests, we apply three Origami filters, each with a different processing-power requirement:

- NoOPFilter: does nothing and just returns the image (the Mat object) as is, so it is very lightweight.

- Cartoon2: a visually fun, moderately compute-intensive filter, and

- ALTMRetinex: a compute-intensive image-processing filter.

With the plain for-loop implementation, we get the following times:

| Filter | Time (ms) | Images | Avg (images/sec) |
|---|---|---|---|
| NoOPFilter | 52803 | 2164 | 40 |
| Cartoon2 | 119881 | 2164 | 18 |
| ALTMRetinex | 634010 | 2164 | 3 |

Obviously, these times depend heavily on the hardware, but they serve as the baseline for comparison with the other scenarios.
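For reference, the Avg column in these tables is consistent with images × 1000 / time in ms, truncated to an integer (2164 × 1000 / 52803 ≈ 40.98, reported as 40). A minimal timing harness along those lines might look like the sketch below; the truncation and the use of System.nanoTime are assumptions, not necessarily how the original benchmark measured things.

```java
import java.util.concurrent.TimeUnit;

class BenchmarkSketch {
    // Throughput as images per second, truncated to an integer (elapsedMs must be > 0)
    static long imagesPerSecond(int images, long elapsedMs) {
        return images * 1000L / elapsedMs;
    }

    // Runs a scenario once and returns the wall-clock time in milliseconds
    static long timeMs(Runnable scenario) {
        long start = System.nanoTime();
        scenario.run();
        return TimeUnit.NANOSECONDS.toMillis(System.nanoTime() - start);
    }

    public static void main(String[] args) {
        // Recomputing the for-loop baseline rows from the measured times
        System.out.println(imagesPerSecond(2164, 52803));  // prints 40
        System.out.println(imagesPerSecond(2164, 119881)); // prints 18
        System.out.println(imagesPerSecond(2164, 634010)); // prints 3

        // A scenario such as loomyNoThreads(images) would be wrapped in the lambda
        long ms = timeMs(() -> { });
        System.out.println("elapsed: " + ms + " ms");
    }
}
```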

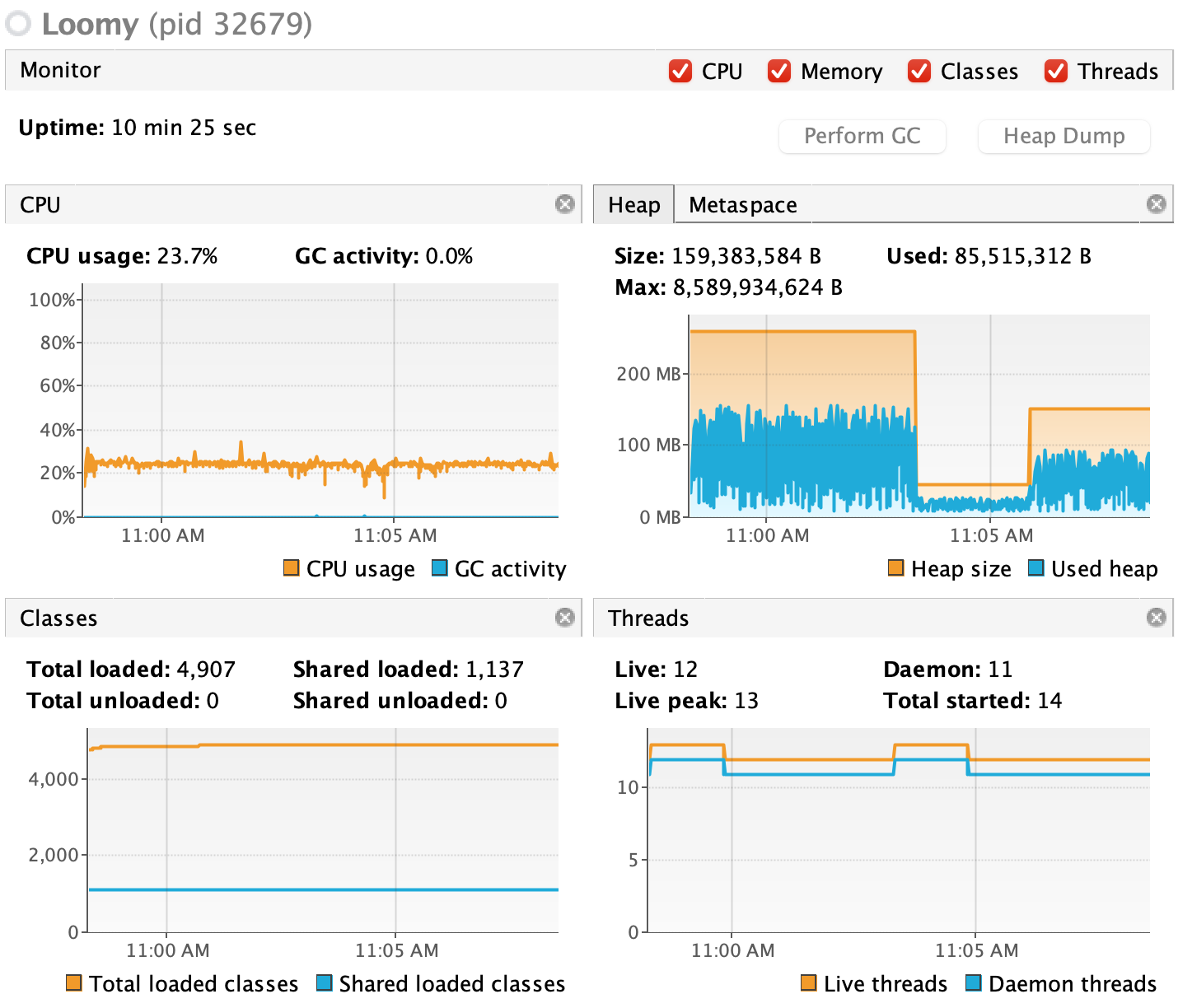

VisualVM also confirms that the number of threads in this case is low.

Enough of Loops Wasting My Time: Gimme Some Threads

Well, the last run did take a long time to finish, didn’t it? (Especially while writing this article, when I had to re-run it 3-4 times…) What about something faster?

Let’s rewrite this processing test, this time with a bunch of threads. In this first case, we go all out and do not limit the number of threads: one new thread is started per image.

```java
private static void loomyStandardThreads(File[] images) throws InterruptedException {
    var listOfThreads = new ArrayList<Thread>(images.length);
    // Start one OS-backed thread per image, with no upper bound
    for (int i = 0; i < images.length; i++) {
        var t = new Thread(new OrigamiTaskAsRunnable(images[i]));
        listOfThreads.add(t);
        t.start();
    }
    // Wait for every thread to finish
    for (int i = 0; i < images.length; i++) {
        listOfThreads.get(i).join();
    }
}
```

Here are the timings for the three runs with the above code:

| Filter | Time (ms) | Images | Avg (images/sec) |
|---|---|---|---|
| NoOPFilter | 20969 | 2164 | 103 |
| Cartoon2 | 60027 | 2164 | 36 |
| ALTMRetinex | SIGKILL | 2164 | - |

Well, that was interesting. Processing did get faster with the first two filters, but during the last run, with the compute-intensive ALTMRetinex filter, the OS killed the entire JavaVM (SIGKILL). There has to be a better way…
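One common way to avoid starting one platform thread per image is to cap the number of threads with a fixed-size pool. The sketch below is a generic illustration rather than code from the article: the task is a hypothetical stand-in for process(image) that sleeps briefly, and the method reports the highest number of tasks seen running at once, which cannot exceed the pool size.

```java
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.TimeUnit;
import java.util.concurrent.atomic.AtomicInteger;

class BoundedPoolSketch {
    // Runs taskCount tasks on at most poolSize platform threads; returns the peak concurrency observed
    static int peakConcurrency(int taskCount, int poolSize) {
        AtomicInteger running = new AtomicInteger();
        AtomicInteger peak = new AtomicInteger();
        ExecutorService pool = Executors.newFixedThreadPool(poolSize);
        for (int i = 0; i < taskCount; i++) {
            pool.execute(() -> {
                int now = running.incrementAndGet();
                peak.accumulateAndGet(now, Math::max);
                try {
                    Thread.sleep(5); // hypothetical stand-in for process(image)
                } catch (InterruptedException e) {
                    Thread.currentThread().interrupt();
                } finally {
                    running.decrementAndGet();
                }
            });
        }
        // Stop accepting tasks, then wait for the queued ones to finish
        pool.shutdown();
        try {
            pool.awaitTermination(1, TimeUnit.MINUTES);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        return peak.get();
    }

    public static void main(String[] args) {
        int cores = Runtime.getRuntime().availableProcessors();
        System.out.println("peak concurrency: " + peakConcurrency(200, cores));
    }
}
```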

Well, Yes, But Usually We Use Threads From a Pool

Fair enough. Let’s try a ForkJoinPool, and execute on it the Origami task implemented earlier as a RecursiveTask.

This gives the code below:

```java
private static void loomyForkJoin(File[] images) {
    ForkJoinPool forkJoinPool = new ForkJoinPool();
    for (int i = 0; i < images.length; i++) {
        OrigamiTaskAsRecursiveTask task = new OrigamiTaskAsRecursiveTask(images[i]);
        forkJoinPool.execute(task);
    }
    // Stop accepting new tasks, then wait until all submitted tasks are done
    forkJoinPool.shutdown();
    try {
        forkJoinPool.awaitTermination(Long.MAX_VALUE, TimeUnit.NANOSECONDS);
    } catch (InterruptedException e) {
        e.printStackTrace();
    }
}
```

To make sure the pool runs all the tasks to completion, we call shutdown on it and then await its termination.
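Shutting the pool down and awaiting termination waits for everything, but it discards the String each RecursiveTask returns. If the output paths are needed, one alternative is to keep the ForkJoinTask handles returned by submit() and join them. A sketch, with a hypothetical process stand-in that works on file names instead of images:

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.ForkJoinPool;
import java.util.concurrent.ForkJoinTask;
import java.util.concurrent.RecursiveTask;

class ForkJoinCollectSketch {
    // Hypothetical stand-in for the Origami work: returns the output path
    static String process(String name) {
        return "target/" + name;
    }

    static List<String> processAll(List<String> names) {
        ForkJoinPool pool = new ForkJoinPool();
        try {
            List<ForkJoinTask<String>> tasks = new ArrayList<>();
            for (String name : names) {
                // submit() hands back the task so its result can be joined later
                tasks.add(pool.submit(new RecursiveTask<String>() {
                    @Override
                    protected String compute() {
                        return process(name);
                    }
                }));
            }
            List<String> paths = new ArrayList<>();
            for (ForkJoinTask<String> task : tasks) {
                paths.add(task.join()); // blocks until this task has completed
            }
            return paths;
        } finally {
            pool.shutdown();
        }
    }

    public static void main(String[] args) {
        System.out.println(processAll(List.of("a.jpg", "b.jpg"))); // prints [target/a.jpg, target/b.jpg]
    }
}
```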

This time, we get the times below:

| Filter | Time (ms) | Images | Avg (images/sec) |
|---|---|---|---|
| NoOPFilter | 24564 | 2164 | 88 |
| Cartoon2 | 71395 | 2164 | 30 |
| ALTMRetinex | 368637 | 2164 | 5 |

So with a ForkJoinPool, the scenario did finish and wasn’t killed by the OS with the ALTMRetinex filter. This time we are roughly twice as fast as the plain loop with NoOPFilter, and about 1.7 times as fast with Cartoon2 and ALTMRetinex.

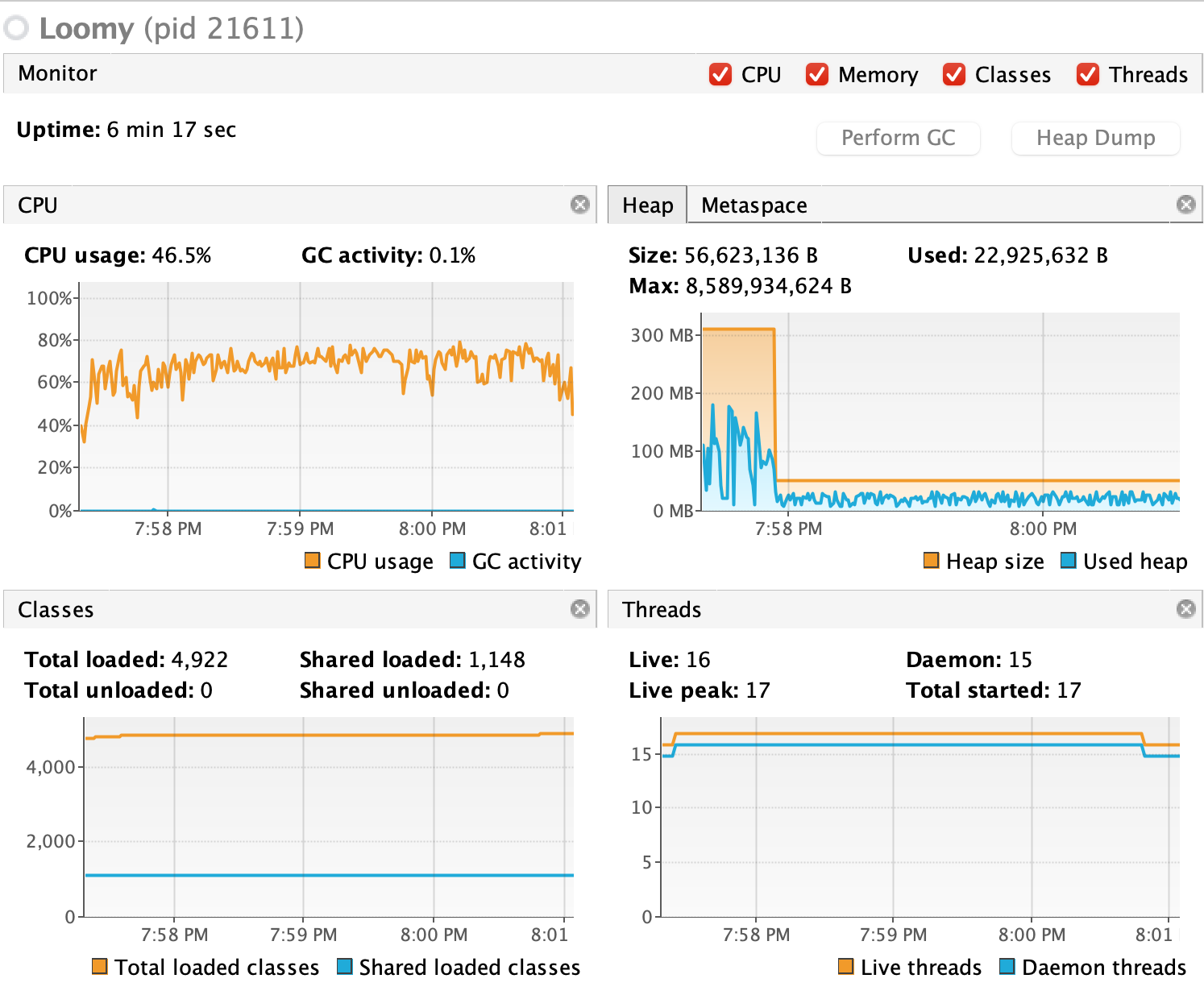

By default, ForkJoinPool sets its parallelism to Runtime.getRuntime().availableProcessors(), which shows in the Threads section of the VisualVM dashboard (the hardware used here has 4 cores).
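This default is easy to check: the no-argument ForkJoinPool constructor uses the value reported by availableProcessors(), which counts logical processors, so on a machine with hyper-threading it can be higher than the number of physical cores. A small sketch, also showing how to pass an explicit parallelism instead:

```java
import java.util.concurrent.ForkJoinPool;

class ParallelismSketch {
    // Parallelism chosen by the no-argument constructor
    static int defaultParallelism() {
        ForkJoinPool pool = new ForkJoinPool();
        try {
            return pool.getParallelism();
        } finally {
            pool.shutdown();
        }
    }

    // Parallelism when it is set explicitly, e.g. to cap the number of workers
    static int explicitParallelism(int workers) {
        ForkJoinPool pool = new ForkJoinPool(workers);
        try {
            return pool.getParallelism();
        } finally {
            pool.shutdown();
        }
    }

    public static void main(String[] args) {
        System.out.println("available processors: " + Runtime.getRuntime().availableProcessors());
        System.out.println("default parallelism:  " + defaultParallelism());
        System.out.println("explicit parallelism: " + explicitParallelism(2)); // prints 2
    }
}
```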

Correct. But We Still Haven’t Learned Anything New Yet. Where Are Those Virtual Threads?

Indeed. Let’s get down to the core of the article. The new Java method from Project Loom to start a virtual thread is… Thread.startVirtualThread. And that’s it.

The rest of the code is identical to the previous standard-thread example.

```java
private static void loomyVirtualThreads(File[] images) throws InterruptedException {
    var listOfThreads = new ArrayList<Thread>(images.length);
    // Thread.startVirtualThread creates and starts a virtual thread in one call
    for (int i = 0; i < images.length; i++) {
        var t = Thread.startVirtualThread(new OrigamiTaskAsRunnable(images[i]));
        listOfThreads.add(t);
    }
    for (int i = 0; i < images.length; i++) {
        listOfThreads.get(i).join();
    }
}
```

With lightweight threads, we get the times in the table below:

| Filter | Time (ms) | Images | Avg (images/sec) |
|---|---|---|---|
| NoOPFilter | 19784 | 2164 | 109 |
| Cartoon2 | 61621 | 2164 | 35 |
| ALTMRetinex | 350405 | 2164 | 6 |

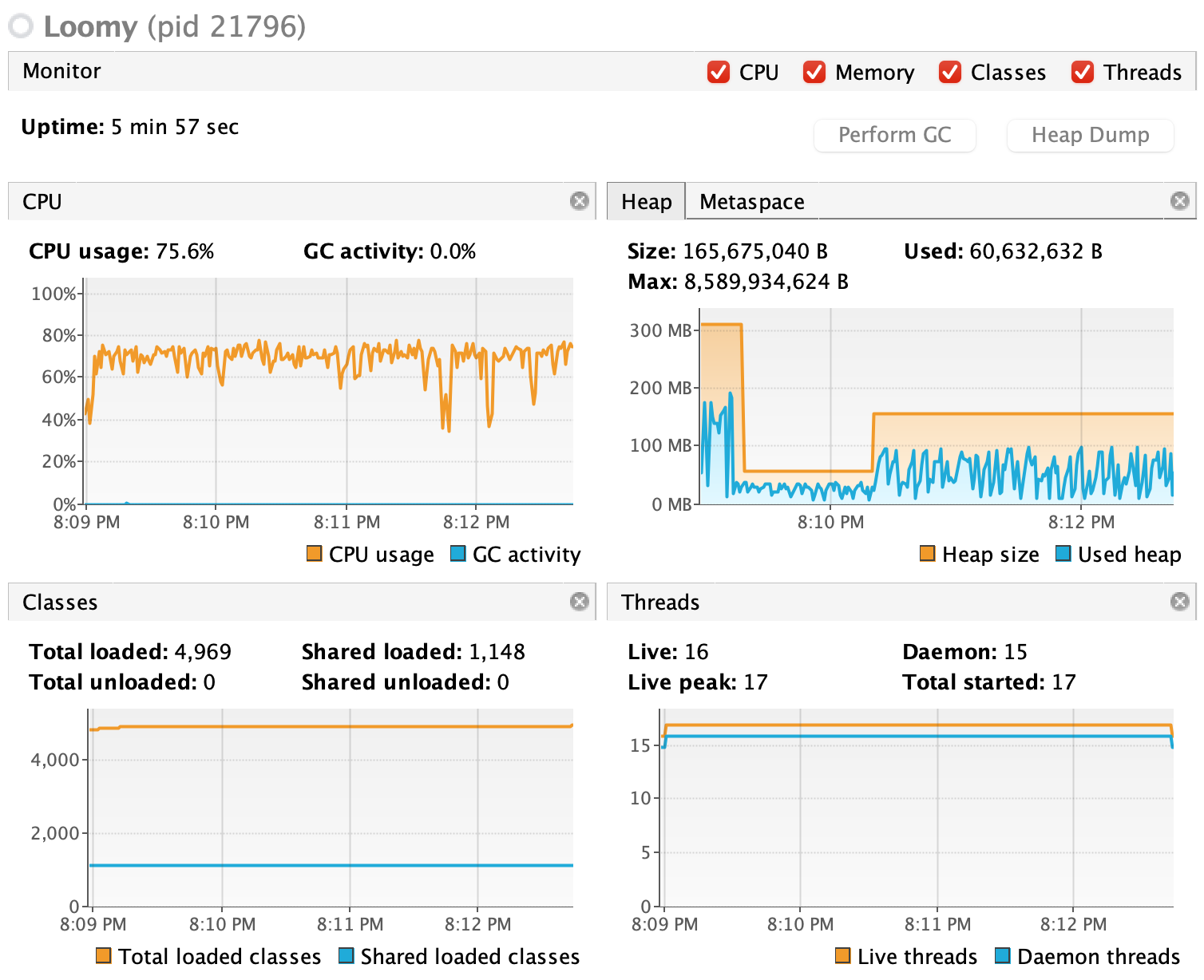

VisualVM does not look much different, with the same overall number of threads.

If we look at the stack trace of a virtual thread, though, we see the new java.lang.VirtualThread class at work, running on top of a ForkJoinPool worker thread.

```
"ForkJoinPool-1-worker-1" #22 [26639] daemon prio=5 os_prio=31 cpu=30678.23ms elapsed=53.37s tid=0x00007fcf512f4400 nid=0x680f runnable [0x0000700002b86000]
   java.lang.Thread.State: RUNNABLE
	at org.opencv.core.Mat.nGet(Native Method)
	at org.opencv.core.Mat.get(Mat.java:1111)
	at com.isaac.models.ALTMRetinex.obtainGain(ALTMRetinex.java:109)
	at com.isaac.models.ALTMRetinex.enhance(ALTMRetinex.java:56)
	at origami.filters.isaac.ALTMRetinex.apply(ALTMRetinex.java:15)
	at Loomy.process(Loomy.java:107)
	at Loomy$OrigamiTaskAsRunnable.run(Loomy.java:125)
	at java.lang.VirtualThread.run(java.base@17-loom/VirtualThread.java:295)
	at java.lang.VirtualThread$VThreadContinuation.lambda$new$0(java.base@17-loom/VirtualThread.java:172)
	at java.lang.VirtualThread$VThreadContinuation$$Lambda$122/0x0000000801352470.run(java.base@17-loom/Unknown Source)
	at java.lang.Continuation.enter0(java.base@17-loom/Continuation.java:372)
	at java.lang.Continuation.enter(java.base@17-loom/Continuation.java:365)
```
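In that dump, the virtual thread is mounted on a ForkJoinPool worker, its carrier thread. On JDK 21 or later, where Thread.isVirtual(), Thread.ofVirtual() and Thread.ofPlatform() are part of the final API (this article used an earlier Loom build), the difference can also be checked from code. A minimal sketch under that JDK 21 assumption:

```java
import java.util.concurrent.atomic.AtomicBoolean;

class VirtualThreadInfoSketch {
    // Starts a virtual or a platform thread and reports whether the task saw itself as virtual (JDK 21+)
    static boolean runsOnVirtualThread(boolean virtual) {
        AtomicBoolean seenVirtual = new AtomicBoolean();
        Runnable task = () -> {
            Thread current = Thread.currentThread();
            seenVirtual.set(current.isVirtual());
            // For a virtual thread, toString() typically names the carrier, e.g. ...@ForkJoinPool-1-worker-1
            System.out.println(current);
        };
        Thread t = virtual ? Thread.ofVirtual().start(task) : Thread.ofPlatform().start(task);
        try {
            t.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        return seenVirtual.get();
    }

    public static void main(String[] args) {
        System.out.println("virtual:  " + runsOnVirtualThread(true));
        System.out.println("platform: " + runsOnVirtualThread(false));
    }
}
```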

In this scenario, we have learned quite a few new things:

- Virtual threads are easy to use, with the same syntax developers are already used to.

- Virtual threads are on par with standard threads for speed on light to medium processing tasks.

- Virtual threads are well managed and don’t crash the virtual machine by using too many resources, so the ALTMRetinex processing finished.
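On JDK 21 or later, where virtual threads are a final feature rather than part of an early-access build, the same one-virtual-thread-per-image pattern can also be written with Executors.newVirtualThreadPerTaskExecutor(). Closing the executor (here via try-with-resources) waits for all submitted tasks, which replaces the explicit join loop. A sketch, assuming JDK 21 and using a short sleep as a hypothetical stand-in for process(image):

```java
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.atomic.AtomicInteger;

class VirtualExecutorSketch {
    // Starts one virtual thread per task and waits for all of them (JDK 21+)
    static int processAll(int taskCount) {
        AtomicInteger done = new AtomicInteger();
        try (ExecutorService executor = Executors.newVirtualThreadPerTaskExecutor()) {
            for (int i = 0; i < taskCount; i++) {
                executor.submit(() -> {
                    // Hypothetical stand-in for process(image): a short blocking pause
                    try {
                        Thread.sleep(10);
                    } catch (InterruptedException e) {
                        Thread.currentThread().interrupt();
                        return;
                    }
                    done.incrementAndGet();
                });
            }
        } // close() waits until every submitted task has finished
        return done.get();
    }

    public static void main(String[] args) {
        System.out.println(processAll(10_000)); // prints 10000
    }
}
```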

Ok, I Got All This About Light Threads, But Can You Give Me Some Priority?

Well, we can try to do that.

This new scenario splits the image processing between two groups of virtual threads: half with maximum priority (10) and half with minimum priority (1).

```java
private static void loomyVirtualThreadsSplitArrayAndPriority(File[] images)
        throws InterruptedException {
    int n = images.length;
    // Split the images into two halves; the first half gets the extra image when n is odd
    File[] i1 = Arrays.copyOfRange(images, 0, (n + 1) / 2);
    File[] i2 = Arrays.copyOfRange(images, (n + 1) / 2, n);

    // First half: virtual threads with priority 1 (loomyVirtualThreadsWithPriority not shown)
    Thread t = new Thread(() -> {
        try {
            loomyVirtualThreadsWithPriority(i1, 1);
        } catch (InterruptedException e) {
            e.printStackTrace();
        }
    });
    // Second half: virtual threads with priority 10
    Thread t2 = new Thread(() -> {
        try {
            loomyVirtualThreadsWithPriority(i2, 10);
        } catch (InterruptedException e) {
            e.printStackTrace();
        }
    });
    t.start();
    t2.start();
    t.join();
    t2.join();
}
```
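The (n + 1) / 2 boundary means that, with an odd number of images, the first half (the priority-1 group) gets the extra one; with 2164 images both halves hold 1082, which matches the log lines further down. A quick check of that arithmetic, using plain Object arrays in place of File[]:

```java
import java.util.Arrays;

class SplitSketch {
    // Same split as loomyVirtualThreadsSplitArrayAndPriority; returns the two half sizes
    static int[] halfSizes(Object[] items) {
        int n = items.length;
        Object[] first = Arrays.copyOfRange(items, 0, (n + 1) / 2);
        Object[] second = Arrays.copyOfRange(items, (n + 1) / 2, n);
        return new int[] { first.length, second.length };
    }

    public static void main(String[] args) {
        System.out.println(Arrays.toString(halfSizes(new Object[2164]))); // prints [1082, 1082]
        System.out.println(Arrays.toString(halfSizes(new Object[5])));    // prints [3, 2]
    }
}
```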

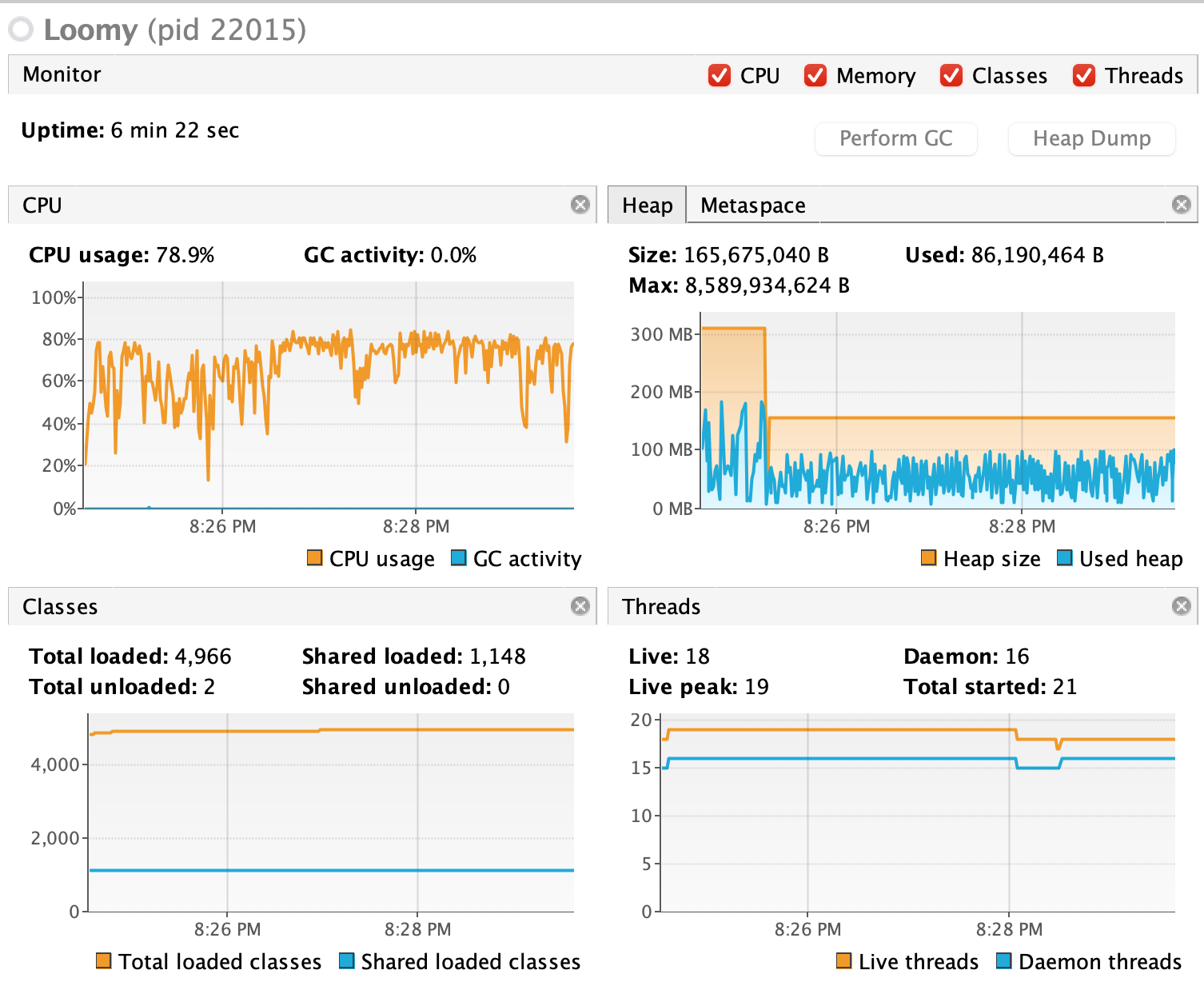

This should stress the virtual-thread scheduler a bit.

Results are shown in the table below. The good times we got without touching thread priorities are mostly preserved, but…

| Filter | Time (ms) | Images | Avg (images/sec) |
|---|---|---|---|
| NoOPFilter | 20393 | 2164 | 106 |
| Cartoon2 | 67989 | 2164 | 31 |
| ALTMRetinex | 350405 | 2164 | 6 |

But wait… we are getting almost the same times as without setting thread priorities, and the difference between the two thread groups is not significant:

```
Processing with priority: 10 finished. [1082 images] [19925 ms]
Processing with priority: 1 finished. [1082 images] [20392 ms]
```

Correct!

That’s because, in the current Project Loom implementation, as the Thread.setPriority documentation puts it: “The priority of a virtual thread is always NORM_PRIORITY and newPriority is ignored.”

And so, even if we try to change the priority of a virtual thread, it stays the same, as the implementation of Thread.setPriority shows:

```java
public final void setPriority(int newPriority) {
    checkAccess();
    if (newPriority > MAX_PRIORITY || newPriority < MIN_PRIORITY) {
        throw new IllegalArgumentException();
    }
    // Only platform threads get their priority updated; virtual threads skip this
    if (!isVirtual()) {
        priority(newPriority);
    }
}
```
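On JDK 21, where virtual threads are final, this behaviour can be checked directly: setting MAX_PRIORITY on an unstarted virtual thread leaves getPriority() at NORM_PRIORITY, while a platform thread takes the new value (capped by its thread group’s maximum). A minimal sketch, assuming JDK 21’s Thread.ofVirtual() and Thread.ofPlatform() builders:

```java
class VirtualPrioritySketch {
    // Sets a priority and reads it back (JDK 21+ for Thread.ofVirtual / Thread.ofPlatform)
    static int priorityAfterSet(Thread t, int newPriority) {
        t.setPriority(newPriority);
        return t.getPriority();
    }

    public static void main(String[] args) {
        Thread virtual = Thread.ofVirtual().unstarted(() -> { });
        Thread platform = Thread.ofPlatform().unstarted(() -> { });
        // The virtual thread keeps NORM_PRIORITY (5); the platform thread takes the new value
        System.out.println("virtual:  " + priorityAfterSet(virtual, Thread.MAX_PRIORITY));
        System.out.println("platform: " + priorityAfterSet(platform, Thread.MIN_PRIORITY));
    }
}
```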

So there is no way to set priorities on virtual threads, but this is only a small drawback, since you can spawn a very large number of these lightweight threads.

Conclusion

First, obvious but worth repeating: Origami is fully thread-safe.

Second, Project Loom lets you spawn lightweight threads at will, without worrying about exhausting resources.

Third, as you can see in the article’s companion GitHub repo, the whole thing also works with Kotlin 1.5.20-RC.

Fourth, if you give it a try, make sure to also try the ready-to-use Origami DNN filters, such as:

- MyYolo$V2Tiny

- MyYolo$V3Tiny

- MyYolo$V4

And see how they work for you!